대외활동

[혼공학습단 8기] 혼자 공부하는 머신러닝+딥러닝 4주차

호오우

2022. 7. 29. 13:31

기본미션

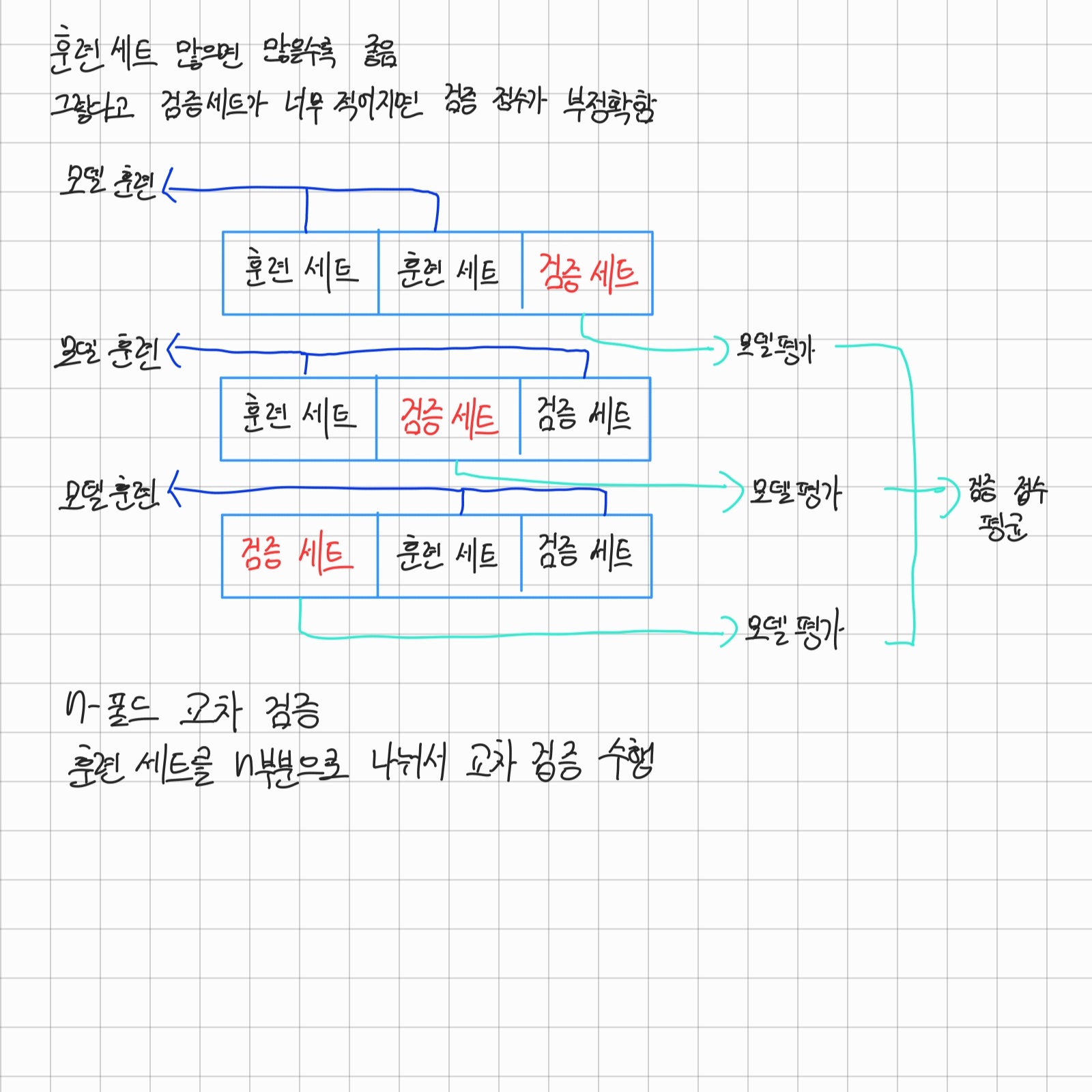

훈련세트는 많으면 많을수록 좋다고 한다.

하지만 그렇다고 검증세트를 줄이고 훈련세트를 늘린다면

정확한 모델이 만들어지지 않을 것이다.

이를 해결하기 위해 나온 방법이 N-폴드 교차 검증이다.

선택미션

import numpy as np

import pandas as pd

from sklearn.model_selection import train_test_split

wine = pd.read_csv('https://bit.ly/wine_csv_data')

data = wine[['alcohol', 'sugar', 'pH']].to_numpy()

target = wine['class'].to_numpy()

train_input, test_input, train_target, test_target = train_test_split(data, target, test_size=0.2, random_state=42)

from sklearn.model_selection import cross_validate

from sklearn.ensemble import RandomForestClassifier

rf = RandomForestClassifier(n_jobs=-1, random_state=42)

scores = cross_validate(rf, train_input, train_target, return_train_score=True, n_jobs=-1)

print(np.mean(scores['train_score']), np.mean(scores['test_score']))

rf.fit(train_input, train_target)

print(rf.feature_importances_)

rf = RandomForestClassifier(oob_score=True, n_jobs=-1, random_state=42)

rf.fit(train_input, train_target)

print(rf.oob_score_)

from sklearn.ensemble import ExtraTreesClassifier

et = ExtraTreesClassifier(n_jobs=-1, random_state=42)

scores = cross_validate(et, train_input, train_target, return_train_score=True, n_jobs=-1)

print(np.mean(scores['train_score']), np.mean(scores['test_score']))

et.fit(train_input, train_target)

print(et.feature_importances_)

from sklearn.ensemble import GradientBoostingClassifier

gb = GradientBoostingClassifier(random_state=42)

scores = cross_validate(gb, train_input, train_target, return_train_score=True, n_jobs=-1)

print(np.mean(scores['train_score']), np.mean(scores['test_score']))

gb = GradientBoostingClassifier(n_estimators=500, learning_rate=0.2, random_state=42)

scores = cross_validate(gb, train_input, train_target, return_train_score=True, n_jobs=-1)

print(np.mean(scores['train_score']), np.mean(scores['test_score']))

gb.fit(train_input, train_target)

print(gb.feature_importances_)

from sklearn.ensemble import HistGradientBoostingClassifier

hgb = HistGradientBoostingClassifier(random_state=42)

scores = cross_validate(hgb, train_input, train_target, return_train_score=True, n_jobs=-1)

print(np.mean(scores['train_score']), np.mean(scores['test_score']))

from sklearn.inspection import permutation_importance

hgb.fit(train_input, train_target)

result = permutation_importance(hgb, train_input, train_target, n_repeats=10,

random_state=42, n_jobs=-1)

print(result.importances_mean)

from sklearn.inspection import permutation_importance

hgb.fit(train_input, train_target)

result = permutation_importance(hgb, train_input, train_target, n_repeats=10,

random_state=42, n_jobs=-1)

print(result.importances_mean)

result = permutation_importance(hgb, test_input, test_target, n_repeats=10,

random_state=42, n_jobs=-1)

print(result.importances_mean)

hgb.score(test_input, test_target)

from xgboost import XGBClassifier

xgb = XGBClassifier(tree_method='hist', random_state=42)

scores = cross_validate(xgb, train_input, train_target, return_train_score=True, n_jobs=-1)

print(np.mean(scores['train_score']), np.mean(scores['test_score']))

from lightgbm import LGBMClassifier

lgb = LGBMClassifier(random_state=42)

scores = cross_validate(lgb, train_input, train_target, return_train_score=True, n_jobs=-1)

print(np.mean(scores['train_score']), np.mean(scores['test_score']))